✨ Introduction

Mental health care is evolving—and fast. At the center of this transformation is artificial intelligence. From AI-powered chatbots to advanced emotional tracking systems, mental health technology is reshaping how people access support (Bendig, Erb, Schulze-Thuesing, & Baumeister, 2019).

What once required a therapist’s office can now happen through a smartphone. This shift has made digital mental health tools more accessible than ever. People are turning to AI therapy for convenience, affordability, and privacy (Inkster, Sarda, & Subramanian, 2018).

But as AI becomes more integrated into our emotional lives, a deeper question arises: How is it changing us?

The conversation around AI and mental health often highlights its benefits. Yet research suggests there are also subtle risks. These include the hidden psychological effects of talking to AI, which can influence emotional behavior and social interaction over time (Fang, Peng, & Zhang, 2025).

🧩 Understanding AI and Mental Health

What Is AI in Mental Health?

Artificial intelligence in mental health refers to systems designed to simulate human interaction and provide emotional support. These tools form the foundation of modern AI therapy, offering structured conversations and coping strategies (Fitzpatrick, Darcy, & Vierhile, 2017).

They include:

- Chatbots that mimic therapeutic dialogue

- Mood-tracking apps

- Virtual companions

This growing field is part of a larger shift toward digital mental health, where technology makes emotional support more accessible (Laranjo et al., 2018).

Why AI Is Gaining Popularity in Mental Health Care

Online therapy trends in 2026 reflect a shift toward digital-first care.

Key reasons include:

- Accessibility in underserved areas (Li, Peng, & Rheu, 2024)

- Lower costs compared to traditional therapy

- Reduced stigma

- 24/7 availability

Current Applications and Technologies

Modern mental health technology relies on:

- Natural Language Processing (NLP)

- Machine learning

- Emotion recognition systems

These advancements power what researchers call chatbot psychology, which explores how humans emotionally interact with AI systems (Shu, Lai, & He, 2026).
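To make the idea of emotion recognition concrete, here is a deliberately simple sketch in Python. It is not how any particular product works: real systems typically rely on trained language models, while this toy version only counts words from a small hand-written list. The `EMOTION_KEYWORDS` table and the `tag_emotions` function are hypothetical names invented for this illustration.

```python
import re

# Hypothetical keyword lists, invented for this illustration only.
EMOTION_KEYWORDS = {
    "anxiety": {"anxious", "worried", "nervous", "panic"},
    "sadness": {"sad", "lonely", "hopeless", "down"},
    "calm": {"calm", "relaxed", "peaceful"},
}

def tag_emotions(message):
    """Count how many words in the message match each emotion's keyword list."""
    words = re.findall(r"[a-z']+", message.lower())
    tags = {}
    for emotion, keywords in EMOTION_KEYWORDS.items():
        hits = sum(1 for word in words if word in keywords)
        if hits > 0:
            tags[emotion] = hits
    return tags

print(tag_emotions("I feel anxious and worried about exams"))
```

A keyword count like this misses sarcasm, negation ("not sad"), and context, which is one reason simulated understanding should not be mistaken for the real thing.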

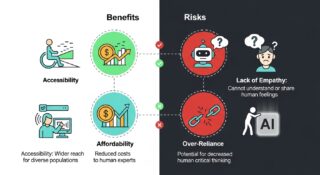

🌟 Benefits of AI and Mental Health Support

Increased Accessibility to Mental Health Resources

AI has made mental health support more inclusive.

For individuals without access to therapists, digital mental health tools offer a practical alternative. They remove barriers like distance, cost, and long wait times (Inkster et al., 2018).

At the same time, combining AI tools with trusted human-centered platforms can provide deeper support. Platforms like Umeed-e-Sukoon (https://umeedesukoon.com/) focus on emotional well-being, awareness, and guidance—helping individuals access reliable mental health resources beyond automated systems.

This balanced approach strengthens outcomes by blending AI therapy with real human insight (Bendig et al., 2019).

Cost-Effective Alternatives to Traditional Therapy

One of the biggest advantages of AI therapy is affordability.

Many AI tools are free or low-cost, making mental health support more accessible to a wider population (Fitzpatrick et al., 2017).

Personalized and Data-Driven Insights

AI systems adapt to user behavior.

They can:

- Identify emotional patterns

- Suggest personalized coping strategies

- Offer behavior-based recommendations

This makes mental health technology more dynamic and user-focused (Laranjo et al., 2018).
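As a rough illustration of how a mood-tracking app might identify emotional patterns and turn them into suggestions, consider the Python sketch below. Everything in it is an assumption made for the example: the 1 to 10 mood scale, the threshold of 4, the three-day window, and the function names are hypothetical and not taken from any specific tool.

```python
from statistics import mean

def moving_average(scores, window=3):
    """Return the trailing average for each full window of daily scores."""
    return [
        mean(scores[i - window + 1 : i + 1])
        for i in range(window - 1, len(scores))
    ]

def has_low_mood_streak(scores, threshold=4, min_days=3):
    """True if mood stayed at or below the threshold for min_days in a row."""
    streak = 0
    for score in scores:
        streak = streak + 1 if score <= threshold else 0
        if streak >= min_days:
            return True
    return False

def suggest_strategy(scores):
    """Map a simple pattern in the mood log to a hypothetical suggestion."""
    if has_low_mood_streak(scores):
        return "Consider reaching out to a professional or a trusted person."
    averages = moving_average(scores)
    if len(averages) >= 2 and averages[-1] < averages[0]:
        return "Mood is trending down; try a guided breathing exercise."
    return "Mood looks stable; keep up your current routine."

daily_mood = [7, 6, 6, 5, 4, 4, 3]  # self-reported scores, 1 (low) to 10 (high)
print(suggest_strategy(daily_mood))
```

Note that the first rule points the user toward a person rather than more app content, in line with the principle that AI should complement professional care.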

Immediate Emotional Support and Crisis Assistance

AI provides instant responses.

Users can:

- Access calming techniques

- Talk through stress in real time

- Receive guided exercises

While helpful, AI should always complement—not replace—professional care (Li et al., 2024).

⚠️ Risks and Limitations of AI in Mental Health

Lack of Human Empathy and Emotional Depth

AI can simulate empathy but does not truly feel emotions.

This limitation shapes how people and AI interact, especially in complex emotional situations where human understanding is essential (Shu et al., 2026).

Privacy and Data Security Concerns

Mental health data is highly sensitive.

Users should be cautious about:

- Data storage practices

- Privacy policies

- Platform credibility

These concerns are part of the broader risks of using AI for mental health (Fang et al., 2025).

Over-Reliance on AI Tools

Convenience can lead to dependency.

Some users may:

- Prefer AI over real conversations

- Avoid seeking professional help

- Develop emotional reliance

Maintaining balance is essential (Chu, Min, & Park, 2025).

🧠 Hidden Psychological Effects of Talking to AI

The psychological effects of AI often develop gradually and can go unnoticed (Fang et al., 2025).

Emotional Attachment to AI Systems

A major concern is emotional attachment to AI.

Users may begin to:

- Form bonds with AI

- Treat it as a confidant

- Prefer it over human interaction

This kind of bonding is a central concern of chatbot psychology (Shu et al., 2026).

Altered Communication Patterns and Social Skills

AI conversations are predictable and non-judgmental.

Over time, habits formed in these conversations can carry over into real-life interactions, subtly shaping how users communicate with other people (Chu et al., 2025).

Reinforcement of Cognitive Biases

AI often validates user input.

This can:

- Reinforce negative thinking

- Limit exposure to new perspectives

- Reduce critical thinking (Fang et al., 2025).

The Illusion of Being Understood

AI creates a strong sense of emotional validation.

However, this is based on algorithms—not genuine understanding (Shu et al., 2026).

Dependency and Escapism

One of the most overlooked AI mental health risks is dependency.

Some users may rely on AI as an emotional escape, avoiding real-life challenges. These patterns highlight the deeper, hidden psychological effects of talking to AI (Yuan, Zhao, & Liu, 2025).

🔍 Ethical Considerations in AI and Mental Health

Responsibility of Developers and Organizations

Developers must design ethical systems that:

- Ensure user safety

- Maintain transparency

- Avoid misleading emotional realism (Fang et al., 2025).

Informed User Consent and Awareness

Users should understand:

- They are interacting with AI

- How their data is used

- The limitations of AI tools (Li et al., 2024).

Balancing Innovation with Human Care

Understanding the pros and cons of a virtual therapist is key:

Pros: Accessibility, affordability, convenience

Cons: Lack of emotional depth, risk of dependency

Combining AI with human support is essential. Trusted platforms like Umeed-e-Sukoon (https://umeedesukoon.com/) provide guidance and awareness, helping users balance AI therapy with real human connection (Inkster et al., 2018).

Real-Life Example:

Rehan, a college student, began chatting with an AI companion daily for emotional support. Over time, he shared more of his personal thoughts with the AI than with his friends. The AI provided consistent validation, but Rehan noticed that he was spending less time with people face to face. Through counseling offered by Umeed-e-Sukoon, he learned strategies to balance digital support with human connection and reduce the risk of emotional dependency (Shu et al., 2026; Fang et al., 2025).

🛠️ Best Practices for Using AI in Mental Health

Combining AI with Professional Therapy

AI works best as a supplement.

Use it for:

- Daily emotional check-ins

- Support between therapy sessions

- Habit tracking

At the same time, integrating human-led support platforms such as Umeed-e-Sukoon (https://umeedesukoon.com/) helps maintain emotional balance and prevent over-reliance on AI systems (Bendig et al., 2019).

Setting Healthy Boundaries with AI Tools

To avoid negative psychological effects of AI:

- Limit usage

- Maintain real-world relationships

- Avoid emotional dependency (Chu et al., 2025).

Choosing Reliable and Ethical AI Platforms

Select tools that are:

- Research-backed

- Transparent

- Secure (Li et al., 2024).

🔮 The Future of AI and Mental Health

Advancements in Emotional Intelligence in AI

AI is becoming more advanced in simulating emotional responses.

Future systems may better understand context and nuance, improving interaction quality (Shu et al., 2026).

Integration with Healthcare Systems

AI is expected to play a larger role in healthcare by:

- Supporting therapists

- Monitoring patient progress

- Enhancing treatment plans

These developments align with how online therapy is evolving in 2026 (Li et al., 2024).

Addressing the Hidden Psychological Effects of Talking to AI

Future research will focus on:

- Ethical safeguards

- User education

- Encouraging real-world interaction (Fang et al., 2025).

❓ Frequently Asked Questions (FAQs)

Is AI therapy effective?

It can be. Evidence suggests AI therapy can help with mild to moderate concerns, such as symptoms of depression and anxiety. However, it should complement professional care, not replace it (Fitzpatrick et al., 2017).

What are the psychological effects of AI on users?

They include emotional attachment, dependency, and changes in communication patterns (Fang et al., 2025).

What are the pros and cons of a virtual therapist?

Pros: Accessibility, affordability, instant support

Cons: Limited empathy, dependency risks (Shu et al., 2026).

Are there risks in using digital mental health tools?

Yes. Common AI mental health risks include privacy concerns and emotional reliance (Chu et al., 2025).

How can I safely use AI for mental health?

Use it in moderation, combine it with human interaction, and rely on trusted platforms like Umeed-e-Sukoon (https://umeedesukoon.com/) for balanced support (Inkster et al., 2018).

🧾 Conclusion

Artificial intelligence is transforming mental health care. Through AI therapy and modern mental health technology, support is now more accessible than ever (Bendig et al., 2019).

But this progress comes with responsibility.

The hidden psychological effects of talking to AI—including emotional attachment, dependency, and behavioral changes—remind us that technology should enhance, not replace, human connection (Fang et al., 2025).

The future of AI and mental health lies in balance.

Use AI wisely. Stay connected to real people. And combine digital tools with trusted human-centered platforms like Umeed-e-Sukoon (https://umeedesukoon.com/) to ensure truly meaningful mental well-being.

📚 References

Bendig, E., Erb, B., Schulze-Thuesing, L., & Baumeister, H. (2019). The next generation: Chatbots in clinical psychology and psychotherapy to foster mental health—A scoping review. JMIR Mental Health, 6(2), e12121. https://doi.org/10.2196/12121

Fitzpatrick, K. K., Darcy, A., & Vierhile, M. (2017). Delivering cognitive behavior therapy to young adults with symptoms of depression and anxiety using a fully automated conversational agent (Woebot): A randomized controlled trial. JMIR Mental Health, 4(2), e19. https://doi.org/10.2196/mental.7785

Inkster, B., Sarda, S., & Subramanian, V. (2018). An empathy-driven, conversational artificial intelligence agent (Wysa) for digital mental well-being: Real-world data evaluation. JMIR mHealth and uHealth, 6(11), e12106. https://doi.org/10.2196/12106

Laranjo, L., Dunn, A. G., Tong, H. L., Kocaballi, A. B., Chen, J., Bashir, R., Surian, D., Gallego, B., Magrabi, F., Lau, A. Y. S., & Coiera, E. (2018). Conversational agents in healthcare: A systematic review. Journal of the American Medical Informatics Association, 25(9), 1248–1258. https://doi.org/10.1093/jamia/ocy072

Li, L., Peng, W., & Rheu, M. (2024). Factors predicting the adoption of AI chatbots for mental health support. Telemedicine and e-Health. Advance online publication. https://doi.org/10.1089/tmj.2023.0313

Shu, C., Lai, K., & He, L. (2026). Human-AI attachment: Understanding emotional bonding with artificial intelligence systems. Frontiers in Psychology. Advance online publication.

Fang, C. M., Peng, W., & Zhang, M. (2025). How AI and human behaviors shape psychosocial effects of chatbot use. arXiv Preprint. https://arxiv.org/abs/2503.17473

Chu, M. D., Min, J., & Park, S. (2025). Illusions of intimacy: Emotional attachment and risks in human-AI relationships. arXiv Preprint. https://arxiv.org/abs/2505.11649

Yuan, Y., Zhao, X., & Liu, Q. (2025). Mental health impacts of AI companions: A longitudinal study. arXiv Preprint. https://arxiv.org/abs/2509.22505